ATLAS e-News

23 February 2011

Simulation strategy for first data

2 June 2008

We are getting closer and closer to the moment when the LHC will finally deliver the proton-proton collisions we have been eagerly waiting for. We have been preparing for this event for many years, but it is now time to finalise our efforts. With this in mind, and in parallel with the well-known CSC notes enterprise, a lot of recent work has gone into defining which Monte Carlo samples (fast and/or full simulation) must be ready when we start collecting data.

A group was created last year to fulfil this task, with a precise mandate defined by ATLAS Physics Coordination. The group is chaired by us (Shoji Asai and Marina Cobal) and includes a representative from each of the physics groups. The first meeting took place on 30 July 2007. Since then, we have had 17 further meetings and several informal discussions involving all the physics and combined performance groups. This long and fruitful work of “mediation” between the needs and wishes expressed by the various communities led to the definition of a list of calibration and physics Monte Carlo samples to be generated, with strictly defined priorities.

The so-called 2008 samples production will start on June 1st and, as decided quite recently, will concentrate on the 10 TeV scenario. Only two months remain before data taking, and in that time the CPU-equivalent of 15 million events with full Geant4 simulation can be generated. Event generation will be shared between full simulation with Geant4 and fast simulation with ATLFAST-II. The level of sharing will be driven by the performance of ATLFAST-II, which is now being studied by the Simulation Strategy group within a project called “Benchmark samples test”.

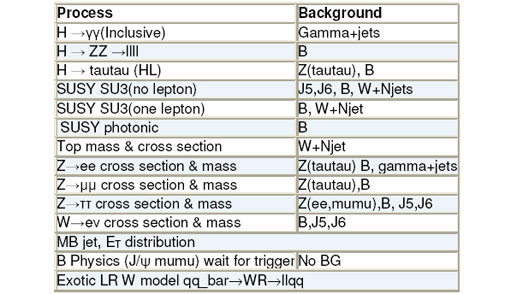

A physics-oriented validation of the simulators is performed using large-scale samples (0.1-1M events), by looking carefully at critical distributions such as missing ET and jet energy scale, depending on the process under study. For this purpose, 14 benchmark physics processes have been identified, together with their relevant backgrounds. The list is given in the table below.

These samples are produced using different simulations:

- (A) Geant4 full simulation

- (B) ATLFAST-II

- (C) ATLFAST-II with the AOD-to-AOD corrections, which are applied offline to recover the distributions obtained in full simulation.

The exercise consists of applying the CSC analysis selection criteria to the signal and the relevant background processes, and comparing (B) and (C) with (A) to see when fast simulation can be used instead of full simulation. Many samples are ready, and people have started making careful comparisons.
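As an illustration only, one way such a comparison of a single distribution could be sketched is shown here. It is not the procedure used by the group: the toy missing-ET values, the binning, the function names and the choice of a two-histogram chi-square are all assumptions made for the example.

```python
import random


def histogram(values, lo, hi, nbins):
    """Count the values that fall in nbins equal-width bins on [lo, hi)."""
    counts = [0] * nbins
    width = (hi - lo) / nbins
    for v in values:
        if lo <= v < hi:
            # min() guards against rounding pushing v into a non-existent bin
            counts[min(int((v - lo) / width), nbins - 1)] += 1
    return counts


def chi2_per_ndf(ref_counts, test_counts):
    """Chi-square per degree of freedom between two unweighted histograms.

    The histograms may hold different numbers of events; bins that are
    empty in both are skipped.
    """
    n_ref, n_test = sum(ref_counts), sum(test_counts)
    if n_ref == 0 or n_test == 0:
        raise ValueError("both histograms need at least one entry")
    chi2, used_bins = 0.0, 0
    for r, t in zip(ref_counts, test_counts):
        if r + t == 0:
            continue
        chi2 += (n_test * r - n_ref * t) ** 2 / (r + t)
        used_bins += 1
    chi2 /= n_ref * n_test
    return chi2 / max(used_bins - 1, 1)


def toy_missing_et(seed, mean_gev, n_events=20000):
    """Toy missing-ET values (GeV) drawn from an exponential; not real data."""
    rng = random.Random(seed)
    return [rng.expovariate(1.0 / mean_gev) for _ in range(n_events)]


if __name__ == "__main__":
    # Hypothetical stand-ins for the three simulation flavours.
    full = histogram(toy_missing_et(1, 30.0), 0.0, 200.0, 20)       # (A)
    fast = histogram(toy_missing_et(2, 36.0), 0.0, 200.0, 20)       # (B)
    corrected = histogram(toy_missing_et(3, 30.0), 0.0, 200.0, 20)  # (C)
    print("(B) vs (A): chi2/ndf =", chi2_per_ndf(full, fast))
    print("(C) vs (A): chi2/ndf =", chi2_per_ndf(full, corrected))
```

A value of chi2/ndf close to 1 would suggest that the fast simulation reproduces the full-simulation shape within statistical fluctuations for that distribution. What counts as acceptable agreement depends on the analysis and is not fixed by this sketch.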

Thanks to all the information collected so far and to the experience we are gaining with the benchmark samples test, the full list for the 2008 Monte Carlo production is almost final (it has already been presented twice at the Physics Coordination meeting). The Monte Carlo data listed so far for the 2008 data taking amounts to about 15M events of “top priority” samples needed for the various calibration studies (such as single-particle guns and low-mass Drell-Yan or J/Psi) and another 0.75M events of second-priority samples. For the more general physics samples (jets, Minimum Bias, QCD W+jets etc.), we have listed about 8.9M top-priority events and about 17M second-priority events (although some of these processes will be moved to ATLFAST-II).

For the production of samples needed for specific analyses within the various physics groups, the CPU-equivalent of about 0.5M Geant4 events has been reserved. Each physics group will decide how to use this quota. If you are interested in more details, please have a look at our wiki page, where all the relevant information is collected and kept up to date.

We want to take this opportunity to thank not only the task force members but also all those who contributed and helped fulfill this task.

Marina Cobal, INFN Trieste and University of Udine, Italy

Shoji Asai, University of Tokyo, Japan